This project explores the realm of computer consciousness, including computer vision, artificial intelligence (AI) and machine learning (ML), along with their associated toolsets and the philosophical and ethical considerations they raise.

The keyword to consider here is awareness. What exactly is awareness and how do we evidence it? How can we make the computer more aware of its surroundings, build knowledge from this and respond to external stimuli? How can we make the computer seem aware?

Software: Processing, openCV for Processing, FaceOSC, Eliza for Processing, RiTa for Processing, Speech for Processing, Wekinator, Runway

References: Machine Learning for Artists, Google AI experiments, openTSPS, openTSPS in Processing, openCV, Consciousness, Chinese Room, Daniel Dennett TED talk

What does it mean to be conscious? Can we explain sadness? Can we truly be objective? Is our personality genetically generated and unchanged by real-world experience? What about adding a new sense from nowhere, as when colour-blind people receive glasses that allow them to see more regular changes in hue?

The four properties of qualia: ineffable, intrinsic, private, and directly apprehensible in consciousness.

“Artificial Intelligence is the science of making machines do things that would require intelligence if done by men.” Marvin Minsky.

Eliza, a program written in 1966 that imitates a Rogerian psychotherapist, makes you question what a mind is.

Excerpts on consciousness, sensation and what it means to question fate:

These excerpts are all from different books, each displayed after the cover page of its book. They all question what consciousness is, what we display through our subconscious mind, and what it means to be self-conscious and live beyond our own fates in this revelation. I think that the embodied mind also touches upon this idea, and all the texts link and contrast in different ways which allow us to better understand how our minds connect to the realities we are bound within. Linking the real world we can physically comprehend with the world we hold within our own minds can be difficult, but doing so only helps us to understand our own minds further.

The first test I have created uses OpenCV within Processing to do frontal face detection. From this I have made everything not detected as a face 12.5% transparent, while faces are fully opaque. This gives the entire sketch a blurred-memory feel, allowing the viewer to feel almost as though they are travelling back into my unconscious mind, exploring memories through the people within them.
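As a rough illustration of the masking idea, here is a minimal Java sketch of how a per-pixel alpha could be chosen from a list of detected face rectangles. The class `FaceAlphaMask`, its `Rect` type and the method names are hypothetical, not taken from the sketch or from any library, and it assumes that "12.5% transparent" means one eighth of the opacity is removed; if the intent was 12.5% opacity, the fraction passed in would simply change.

```java
import java.util.List;

/** Hypothetical helper: chooses an alpha value per pixel from detected face rectangles. */
final class FaceAlphaMask {

    /** Axis-aligned rectangle in pixel coordinates, as a face detector typically reports. */
    static final class Rect {
        final int x, y, w, h;

        Rect(int x, int y, int w, int h) {
            this.x = x;
            this.y = y;
            this.w = w;
            this.h = h;
        }

        boolean contains(int px, int py) {
            return px >= x && px < x + w && py >= y && py < y + h;
        }
    }

    static final int OPAQUE = 255;

    /** Alpha (0-255) that remains after removing the given fraction of opacity. */
    static int alphaFor(float transparency) {
        return Math.round(OPAQUE * (1.0f - transparency));
    }

    /** Pixels inside any face stay fully opaque; everything else is faded. */
    static int alphaAt(int px, int py, List<Rect> faces, float backgroundTransparency) {
        for (Rect face : faces) {
            if (face.contains(px, py)) {
                return OPAQUE;
            }
        }
        return alphaFor(backgroundTransparency);
    }
}
```

In a real sketch the rectangles would come from the face detector on every frame, and the faded background would be drawn over the previous frame so that the trails build up.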

This second video focuses on the same principle, except that here the faces have been reduced in order to reflect my own inability to remember faces in dreams. I only see experiences, and especially with people I have only seen a few times, I struggle to remember how their facial features are composed.

In these sketches I am trying to reflect some form of fragmented subconscious in a dream state. From what I am feeling as I start this project, the whole thing is going to become about how we can regard a consciousness, what a subconscious visual really is, and how the two tend to link.

Consciousness: here is a link to an essay I recently wrote, which focuses on different philosophical approaches to the conscious mind and how we regard our own sentience within modern society's rigid system.

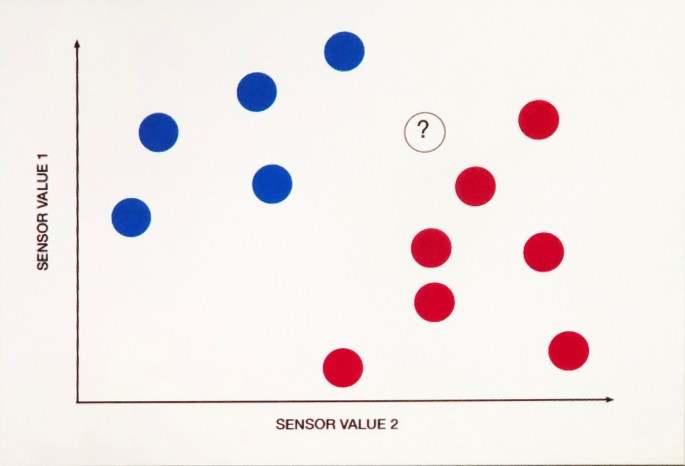

As I am starting to look at machine learning, I want to train on data that avoids inconclusiveness as much as possible, so that the output is as smooth as possible. Here is a diagram which explains how a model's output can become uncertain if it is not trained on clear data.

http://andreasrefsgaard.dk/project/an-algorithm-watching-a-movie-trailer/

https://affinelayer.com/pixsrv/

Here are some machine learning images from the Runway software. I want to explore the relation between what we verbally derive from ideas and what that verbal description could actually depict, as the openness of the result is extremely intriguing.

Here are 2 videos, the first tracked using software and the second manually by me. I find it extremely interesting how much more strongly facial tracking can draw focus. We see faces so passively that we forget how important they are to identifying someone, but once we exaggerate someone's face by manual or machine tracking, their presence is obviously exaggerated too. We as humans always reposition ourselves within space in order to better understand objects, but what if I created a work which prevented us from doing this, by making the piece track us instead and move relative to us, negating any action we made?

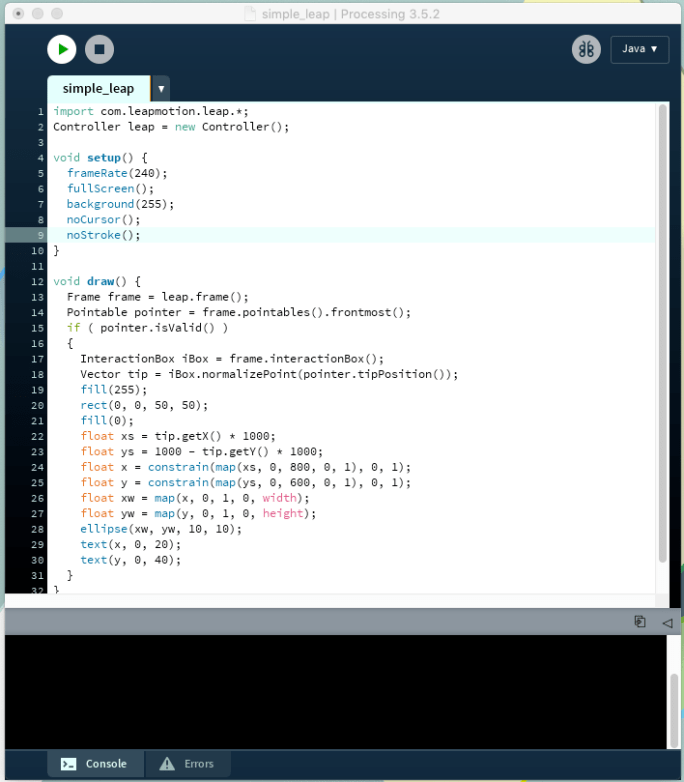

Here I have created a very simple sketch controlled by the Leap Motion. One set of variables, xw and yw, controls the ellipse which allows the user to draw, while the x and y floats are plain values from 0 to 1, which I intend to use to map different things to the Leap Motion control.
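To show why values from 0 to 1 are convenient, here is a minimal Java sketch of normalising a raw sensor reading and then re-ranging it onto any target, such as a screen width. The class `LeapMapping`, its method names and the example input range are assumptions made for illustration only; Processing's built-in `map()` performs a similar re-ranging in one call.

```java
/** Hypothetical helper: turns raw sensor readings into 0..1 values and re-ranges them. */
final class LeapMapping {

    /** Limits a value to the range 0..1. */
    static float clamp01(float v) {
        return Math.max(0f, Math.min(1f, v));
    }

    /** Converts a raw reading within [min, max] to a 0..1 value, clamping anything outside. */
    static float normalize(float raw, float min, float max) {
        return clamp01((raw - min) / (max - min));
    }

    /** Stretches a 0..1 value onto an arbitrary target range. */
    static float mapTo(float t, float start, float stop) {
        return start + t * (stop - start);
    }
}
```

For example, `LeapMapping.mapTo(LeapMapping.normalize(50f, 0f, 200f), 0f, 640f)` places a reading a quarter of the way across the assumed input range a quarter of the way across a 640-pixel canvas. Once everything is 0 to 1, the same float can drive a position, a colour or a time offset without knowing where it came from.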

Monday 11th Seminar – artificial intelligence going wrong is such a common theme because it creates a non-human villain. This is due to the way machines seem to pose a threat to us as humans. It almost seems that the concept of a pure AI is overlooked because, any time AIs are presented within fictional environments without being corrupted, they are treated as human characters due to their extreme personification, e.g. WALL-E, Alexa.

Cybernetics: the "study of control and communication in the animal and the machine."

m_shanahan_technological_singularity_chapter_5_2015

s_blackmore_consciousness_chapter_1_2005

Captcha as a means of detecting bots: captchas are used by computer systems to determine whether or not bots are trying to breach them, which is interesting on so many levels. However, these tests have often become so easy for computers to solve that bots achieve a higher success rate than humans, meaning that the tests constantly have to be reworked. Ironically, we are training computers to recognise things through checks designed to confirm that we aren't computers.

Google are using systems to gain intelligence from us as a form of currency, which enables us to use the internet indefinitely as a free service. Ads are regarded as invading our privacy on a similar level, but they actually facilitate a free service.

If robots become so sentient that they gain awareness of their nature as lesser beings, almost slaves to humanity, will this breach human rights, given that they can easily pass tests such as the Turing test?

Where can we go with this:

Can we create a bot which appears to be aware of its lesser status?

A system skimming memories which appears to allow access to memory banks.

A bot which actively hates humanity.

The technological singularity (also, simply, the singularity) is the hypothesis that the invention of artificial superintelligence (ASI) will abruptly trigger runaway technological growth, resulting in unfathomable changes to human civilization.

Nye Thompson, The Seeker. Looks through web-crawler images and uses image recognition to compile them into a huge chart of all sorts of objects openly visible on the internet.

Tom White, The Treachery of ImageNet. Creates work that machines recognise as objects but which forms very abstract visuals when viewed by a person.

Philipp Schmitt, Unseen Portraits 2, 2016. Altering a face automatically from a portrait until it is so shifted that it can no longer be read as a face by the software.

Adam Harvey, CV Dazzle Look 5, 2013. Hiding faces with looks which use asymmetry to mask them: blocks of colour on a face, hair covering an eye, etc.

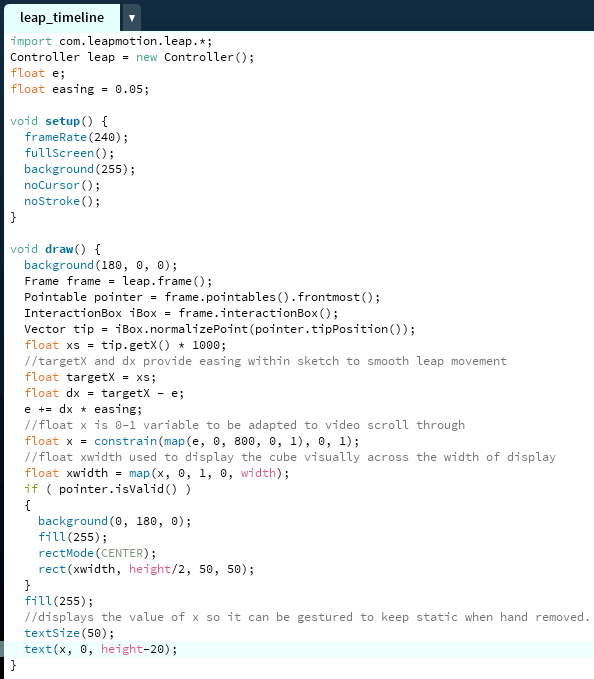

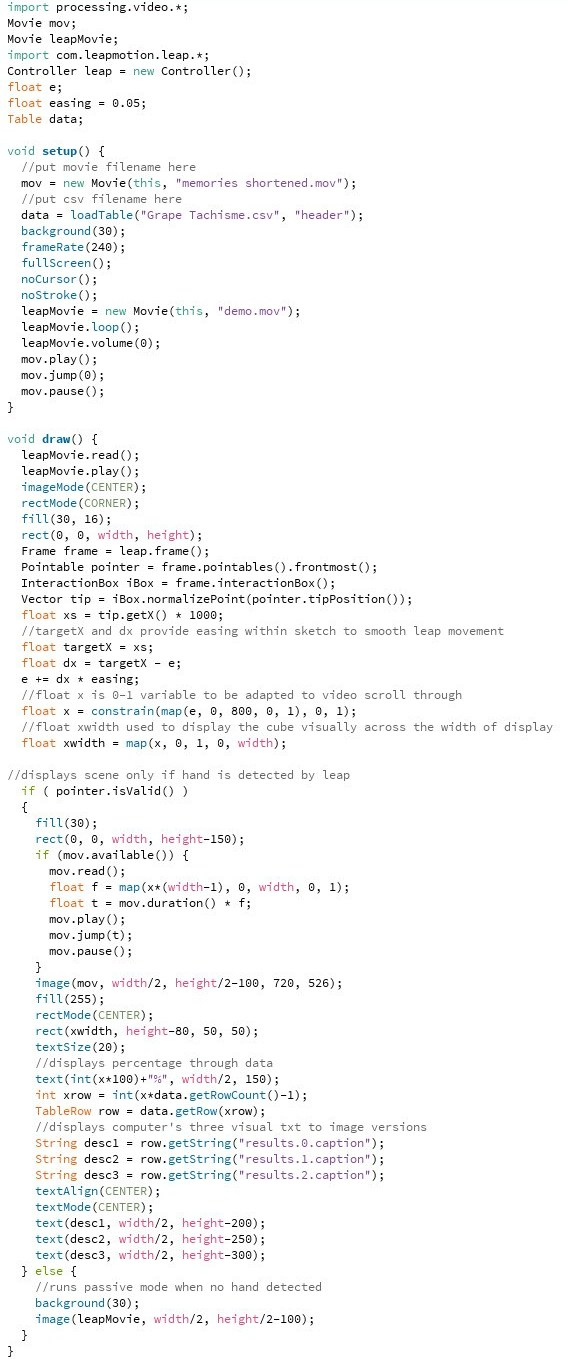

Here is an updated version of my code, which has been simplified throughout so that it simply scrolls from left to right across the screen. I want to use this to map video to the same dimension, allowing the video to be scrolled through as some form of conscious stream.

I have added the video into the sketch, as well as putting the video data through runway processing in order to gain objective information from the video. I want to now experiment with what video I put into the sketch to derive where I intend to go from here as this will heavily push the direction of it visually.

The above video is a clip which shows up when no hand is detected in order to get the user to interact with the digital interface of the leap motion with their hand.

This is how the sketch currently appears within Processing. It plays the video and, alongside it, displays the .csv text which was recorded via Runway. I also have a scale from 0 to 1 at the top, which aims to show the user how far through the data they are. I could change this number to a percentage to make it clearer, but I like the somewhat abstracted nature of the whole sketch, as if peering into a computer's inner thoughts as an objective consciousness.
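As a rough sketch of how a 0-to-1 position could select which line of recorded text to show, the Java below counts the rows of a CSV from the data itself and picks one by position. The class `CaptionTrack`, the header row and the column layout are all assumptions made for illustration; the real Runway export may be laid out differently, and the comma splitting is deliberately naive (no quoted fields).

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: holds one text label per CSV row and looks labels up by 0..1 position. */
final class CaptionTrack {
    private final List<String> captions = new ArrayList<>();

    /** Assumes the first line is a header and each following row holds a label in the given column. */
    CaptionTrack(String csvText, int captionColumn) {
        String[] lines = csvText.split("\\R");
        for (int i = 1; i < lines.length; i++) {
            if (lines[i].trim().isEmpty()) {
                continue; // skip blank lines
            }
            String[] cells = lines[i].split(",", -1);
            if (cells.length <= captionColumn) {
                continue; // skip rows that are too short to hold a label
            }
            captions.add(cells[captionColumn].trim());
        }
    }

    /** Row count is derived from the data, so swapping the file needs no code change. */
    int rowCount() {
        return captions.size();
    }

    /** Returns the label for a position between 0 (start) and 1 (end); needs at least one row. */
    String captionAt(float position) {
        float clamped = Math.max(0f, Math.min(1f, position));
        int index = Math.min(rowCount() - 1, (int) (clamped * rowCount()));
        return captions.get(index);
    }
}
```

With four data rows, a position of 0.5 lands on the third row, and a position of exactly 1 is held on the last row rather than running off the end.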

Here I have posted the new video which I am using within the Processing sketch. I also slightly altered the code so that the row count is found automatically, meaning that only the .csv and movie need to be added to the sketch, with no other code edits. I think the code is quite sublime; however, I want to fine-tune it and simplify it further if possible. Here is the code as it is currently written:

This is a second iteration of the video which I originally intended to use. However, when I experimented with it within the code, it proved too long, with each of the seven segments being 90 seconds. I therefore shortened each segment to 30 seconds, taking the movie from 10:30 (7 × 90 s = 630 s) to only 3:30 (7 × 30 s = 210 s), which makes scanning through it easier for both the user and the computer.

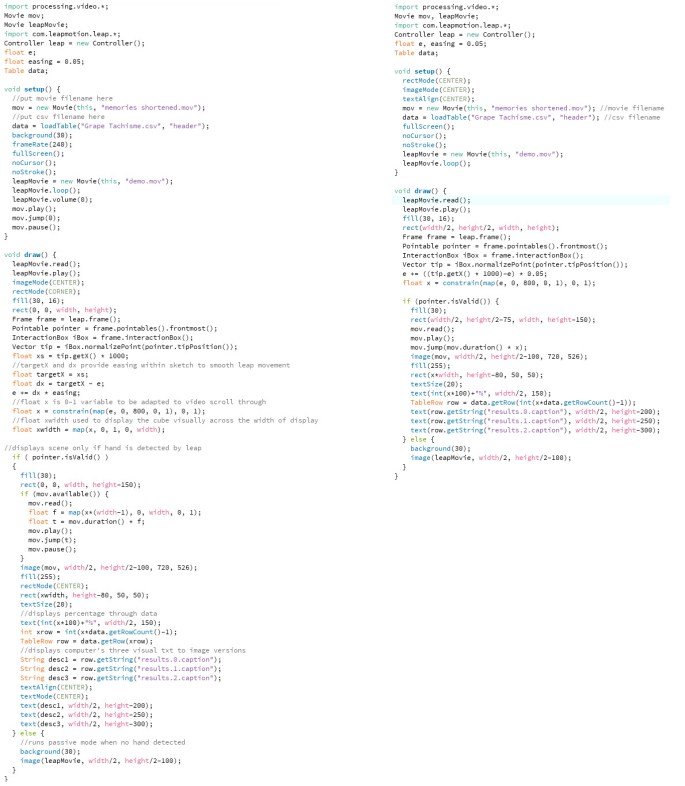

Here you can see how I have shortened the code without changing the outcome. This makes it run more smoothly, because fewer operations are performed within the draw function, and makes it cleaner for a viewer to understand. It also allows me to edit what I am aiming to show with more clarity within the code output.

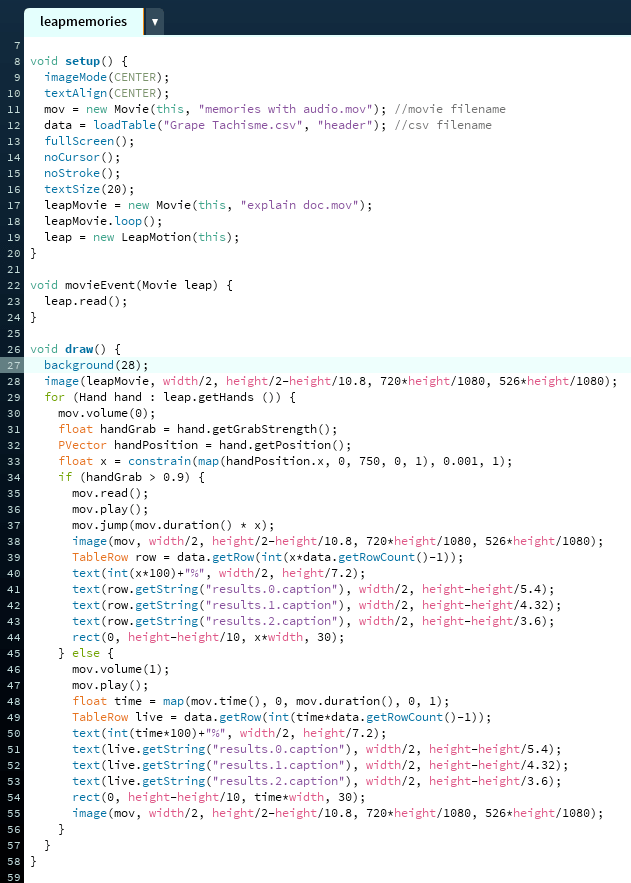

Below you can see how I have implemented a grab gesture within my piece. This allows me to scrub through the video in a much more natural way. I had to change the Leap Motion plugin which I was using within the sketch in order to add the grab function. However, once I did this and changed the appropriate sections to the alternate library's methods, everything worked even more smoothly.

I had to use the time function to make all the text and the load bar at the bottom relative to the video's current time, instead of relative to the Leap sensor, when the palm is left open. This allows them to scale gradually as the video plays, making them feel much more natural. I did this by taking the video time function and dividing it in several different ways, so that everything flows smoothly off one variable. The altered sketch can be seen below.
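Separately from the sketch itself, the switching behaviour described here can be illustrated with a minimal Java example in which a single 0-to-1 variable follows the hand while it is closed and follows playback time while it is open. The class `ScrubState` and its method names are hypothetical. In Processing, the time and duration values would presumably come from the video library's `Movie.time()` and `Movie.duration()`, and the seek target would be passed to `Movie.jump()`, but that wiring is an assumption and is not shown.

```java
/** Hypothetical helper: keeps one 0..1 position that every on-screen element derives from. */
final class ScrubState {
    private float position; // 0 = start of the video, 1 = end

    private static float clamp01(float v) {
        return Math.max(0f, Math.min(1f, v));
    }

    /**
     * Closed hand: the position follows the hand's normalised x, so the user scrubs.
     * Open hand: the position follows the current playback time, so it advances gradually.
     */
    float update(boolean grabbing, float handX, float videoTime, float videoDuration) {
        if (grabbing) {
            position = clamp01(handX);
        } else if (videoDuration > 0f) {
            position = clamp01(videoTime / videoDuration);
        }
        return position;
    }

    /** Time in seconds to seek to while scrubbing. */
    float seekTime(float videoDuration) {
        return position * videoDuration;
    }

    /** Width of the load bar for a given screen width. */
    float barWidth(float screenWidth) {
        return position * screenWidth;
    }
}
```

Because the bar, the text and the seek target all read from the same variable, they cannot drift apart when control passes between the hand and the video clock.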

Here is a newer iteration of the hand video displayed when the Leap Motion detects no hand. This allows the viewer to better understand how to interact with the sketch, as well as creating something to draw the viewer in before they actually choose to interact with it. The new version feels more dated in an aesthetic way, which I prefer, and it explains the grab function and sliding function more clearly than before.

I feel that I want to implement more machine learning aspects such as face tracking within the data in order to express the computer vision of consciousness in a more active and visible way.

Here you can see how I have run my video through runway’s skeleton mesh detection. It has produced models for each person which provide a very machined look. I feel that when I compile this within my main sketch instead of the original footage, it will reduce the nostalgia of the moments and memories I have selected to an even greater extent.

Originally I was going to bring this into Processing via a CSV which I would generate from Runway. However, when trying to do so, not only did I realise I could achieve a more elegant aesthetic in other ways, but I also found that the number of individual variables was so large that it would be an ineffective use of my computer's CPU and graphics. Instead I decided I would simply record the output as video, then overlay it on my footage using a keyer, only allowing the video to show through where the mesh appeared on-screen. This leaves a slight amount of the humanity within the memories, along with the sound, somewhat allowing the moment to be remembered for what it was.

Here is a demonstration of the final piece working in full. It aims to represent a computer primitively learning to palm read. It is displayed reading some form of fragmented memory as the user spans their hand across the leap motion which poses as a form of scanner:

https://en.wikipedia.org/wiki/Psycho-Pass

https://en.wikipedia.org/wiki/Minority_Report_(film)

Sense and Sensibility Final Explanation:

Binary Palm Reading:

The piece I have created is entitled Binary Palm Reading. It aims to mimic the concept of a human reading your palm and telling you of your past and future experiences. Often these readings can be quite vague and the links tenuous at times. The same is true of this binary version. The user clenches their fist to select a position within the reading, then opens their hand to delve deeper into a moment of the reading. This allows them to see a computer-meshed set of people experiencing memories, along with different text interpretations of the scene. Compiled, these form experiences which the viewer cannot place within any time frame, but which may represent something they have experienced or are going to experience in some form. It could be an estranged link, but the link exists.

This piece not only aims to mock the idea of reading someone's entire past and future from such a small element of their physical self, but also gestures towards a future where computers could potentially do that very thing. Minority Report and Psycho-Pass are two examples of real-world shows and movies that display this extension. Computers becoming conscious is always regarded as a dangerous proposition. We can already see how machine learning techniques can be used to understand imagery and identify people within video and image files. The software I have created within Processing physically displays the possibilities of this, and then uses it as a metaphor for how far this ability could be pushed in the not too distant future. The danger is there, and the question is whether we are already too far down the path of creating conscious digital minds to stop it.

The software I have created uses the Leap Motion and at first glance seems relatively harmless in a conceptual sense, appearing to represent only a very select piece of data. I chose the video clips from some of my fondest memories of childhood and implanted them into a cold, digital environment. Through machine learning, I feel the humanity of the clips has been reduced to basic data. The memories are made so devoid of character that anyone could assume they were their own, which is exactly the point I want to represent and compare to a simple palm reading. In an objective reality, which is what you are viewing in this piece, there is a lack of association and interest, making us feel disconnected from its intricate details and viewing it only for a moment before losing intrigue. As soon as character is restored, we instantly find interest in each person and their involvement within the story, however insignificant it may actually be to ourselves.