For the past couple of weeks, I have been working alongside Joseph Lindley and David Green to create a space in gather. It is an interactive paper and social space, adapted from a previously rejected conference submission. The three videos above cover, in order: an introduction to the conference itself, where the gather space will be featured on the 12th of May; an introduction to the whole concept of the submission; and a short demo of what the space is actually like to explore.

This is a link to the specific gather space, which you can explore at your own leisure. There are many Easter eggs and other things hidden within the paper, so do investigate and delve deeper to your heart’s content if you want to unravel everything within the space!

3D scanning is something I have done many times before through photogrammetry. With a structure scanner attached to the iPad, which works like an add-on version of the LiDAR sensor built into newer Apple devices, I can create 3D models instantly by combining depth detection with the camera, in a more advanced form of photogrammetry.

The animation above was created using this technique to test the level of detail it provides, along with the integrity of the mesh: specifically, whether the liquid would flow straight through the mesh due to improper vertex orientation. For a first test the model worked very well, although in future scans I may want to remove gaps in the software before exporting, or scan every single edge.
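As a rough illustration of what "integrity of the mesh" means here, the sketch below checks a triangle mesh for the two problems mentioned: gaps (edges that belong to only one face) and inconsistent orientation (neighbouring faces wound in opposite senses). It is not the check my simulation software runs. The function name `check_mesh` and the input format, a list of triangles given as vertex-index triples, are assumptions made purely for this example.

```python
from collections import Counter


def check_mesh(faces):
    """Report gaps and winding problems in a triangle mesh.

    `faces` is a list of (a, b, c) vertex-index triples (hypothetical
    input format chosen for this sketch).
    """
    directed = Counter()    # how often each edge is walked in a given direction
    undirected = Counter()  # how many faces touch each edge, ignoring direction

    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            directed[(u, v)] += 1
            undirected[(min(u, v), max(u, v))] += 1

    # An edge used by only one face lies on the rim of a hole.
    boundary_edges = sum(1 for n in undirected.values() if n == 1)
    # An edge used by more than two faces is non-manifold.
    non_manifold_edges = sum(1 for n in undirected.values() if n > 2)
    # Two consistently wound neighbours walk their shared edge in opposite
    # directions, so a directed edge seen twice signals a flipped face.
    repeated_directed_edges = sum(1 for n in directed.values() if n > 1)

    return {
        "watertight": boundary_edges == 0 and non_manifold_edges == 0,
        "consistent_winding": repeated_directed_edges == 0,
        "boundary_edges": boundary_edges,
    }


# A closed tetrahedron with every face wound the same way.
tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
print(check_mesh(tetrahedron))
```

A scan with holes would report a non-zero `boundary_edges` count, which is the kind of gap I would want to close before exporting.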

Scanning whole rooms is also something I would like to do. As well as being convertible into accurate models of the spaces through paid services provided by companies, these scans are really useful for making augmented-reality-like experiences within a virtual reality headset.

https://canvas.io/viewer/m1vkR6s9

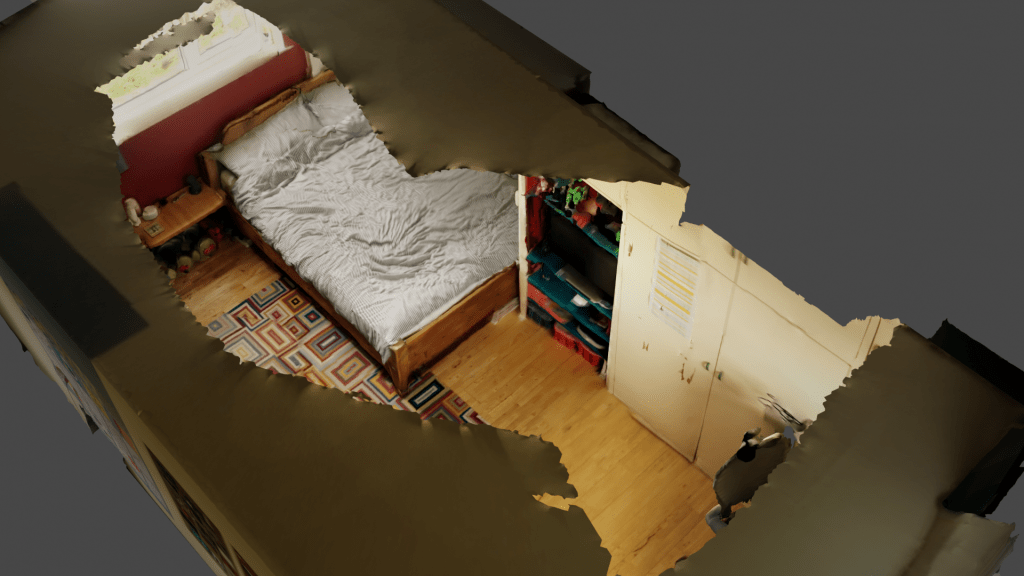

Here you can see one example: a 3D scan of my bedroom. It was a good starting point, as it is something I have 3D modelled in low detail before. It also seemed very fitting, as it is currently the place where I do most of my experiments, both in animation and in VR. I really like being able to see the room from a zoomed-out view, which usually isn’t possible due to the confines of physical existence. Joining models like this together into a full model of my home could be incredibly interesting to play with across several virtual spaces.

Above are several re-meshed versions, made while experimenting with different ways of editing and representing the mesh produced by the iPad scan. The video below shows those mixed together into a representation of the digital and physical crossover my bedroom currently represents, especially as I have been working on my PhD from there most of the time due to the pandemic.

The liminal nature of this space isn’t visually apparent, but when considering it as a non-physical space, the boundaries between my room and cyberspace begin to crack, as does the model itself from the slightly faulty 3D scan.