Looking back at my last thoughts from the first section of Design Domain, I had decided to create fractured artifacts that are supposed to be physical manifestations of emotions. However, I feel that my idea has progressed since then: I want to make the artifacts some form of live object.

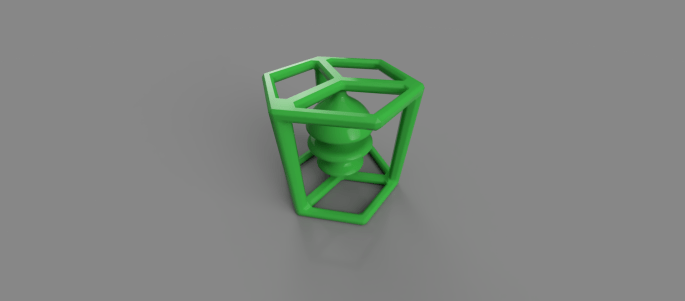

These objects can then be placed on a reader of sorts, which would recognise them and inform the user of their emotional link. I want to print them in green filament so that I can use chroma key techniques when working with them in a video-based environment. From that point I can always paint them later, after sanding them so that the paint sticks to the surface.
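To make the chroma key idea concrete, here is a minimal sketch in plain Python. It is not the tool I will actually use for the video work; the function names, the `margin` threshold and the tiny two-pixel "frame" are all assumptions made for illustration. A pixel counts as key green when its green channel is clearly stronger than both red and blue, and those pixels are swapped for the background.

```python
def is_key_green(pixel, margin=40):
    """Return True if an (r, g, b) pixel looks like the green key colour.

    `margin` is a hypothetical threshold: how much stronger green must be
    than the stronger of the red and blue channels.
    """
    r, g, b = pixel
    return g - max(r, b) > margin


def composite(foreground, background, margin=40):
    """Replace key-green pixels in `foreground` with pixels from `background`.

    Both images are lists of rows, each row a list of (r, g, b) tuples,
    and both must have the same dimensions.
    """
    return [
        [bg_px if is_key_green(fg_px, margin) else fg_px
         for fg_px, bg_px in zip(fg_row, bg_row)]
        for fg_row, bg_row in zip(foreground, background)
    ]


# A one-row "frame": a green artifact pixel next to a skin-toned pixel.
frame = [[(20, 220, 30), (200, 180, 170)]]
backdrop = [[(0, 0, 0), (0, 0, 0)]]
keyed = composite(frame, backdrop)
```

In this sketch the green pixel is replaced by the backdrop while the skin-toned pixel is kept, which is the behaviour I am hoping the vivid green print will give me in real footage.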

I also want to look at the method of communication. I could use a system like NFC to trigger certain text and video when the object is scanned.
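As a rough sketch of how a scan could trigger content, assuming a tag-based reader that reports each object's ID as a string, a simple lookup table would be enough. Everything here is hypothetical: the tag IDs, the placeholder emotion names, the file names and the functions are invented for illustration, and no real reader library is used.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ArtifactContent:
    """The text and video shown when one artifact is scanned."""
    emotion: str
    text: str
    video: str


# Hypothetical tag IDs and placeholder content, one entry per artifact.
CONTENT_BY_TAG = {
    "04:A2:1B:9C": ArtifactContent(
        "placeholder-emotion-a", "Text for artifact A", "artifact_a.mp4"),
    "04:7F:33:D0": ArtifactContent(
        "placeholder-emotion-b", "Text for artifact B", "artifact_b.mp4"),
}


def normalise_uid(raw: str) -> str:
    """Tidy a scanned ID so that ' 04-a2-1b-9c ' and '04:A2:1B:9C' match."""
    return raw.strip().upper().replace("-", ":")


def content_for_tag(uid: str) -> Optional[ArtifactContent]:
    """Return the content for a scanned tag, or None for an unknown object."""
    return CONTENT_BY_TAG.get(normalise_uid(uid))


scanned = content_for_tag(" 04-a2-1b-9c ")
if scanned is not None:
    print(f"Play {scanned.video} and show: {scanned.text}")
```

Returning `None` for an unknown tag keeps the reader from reacting to objects that are not part of the set.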

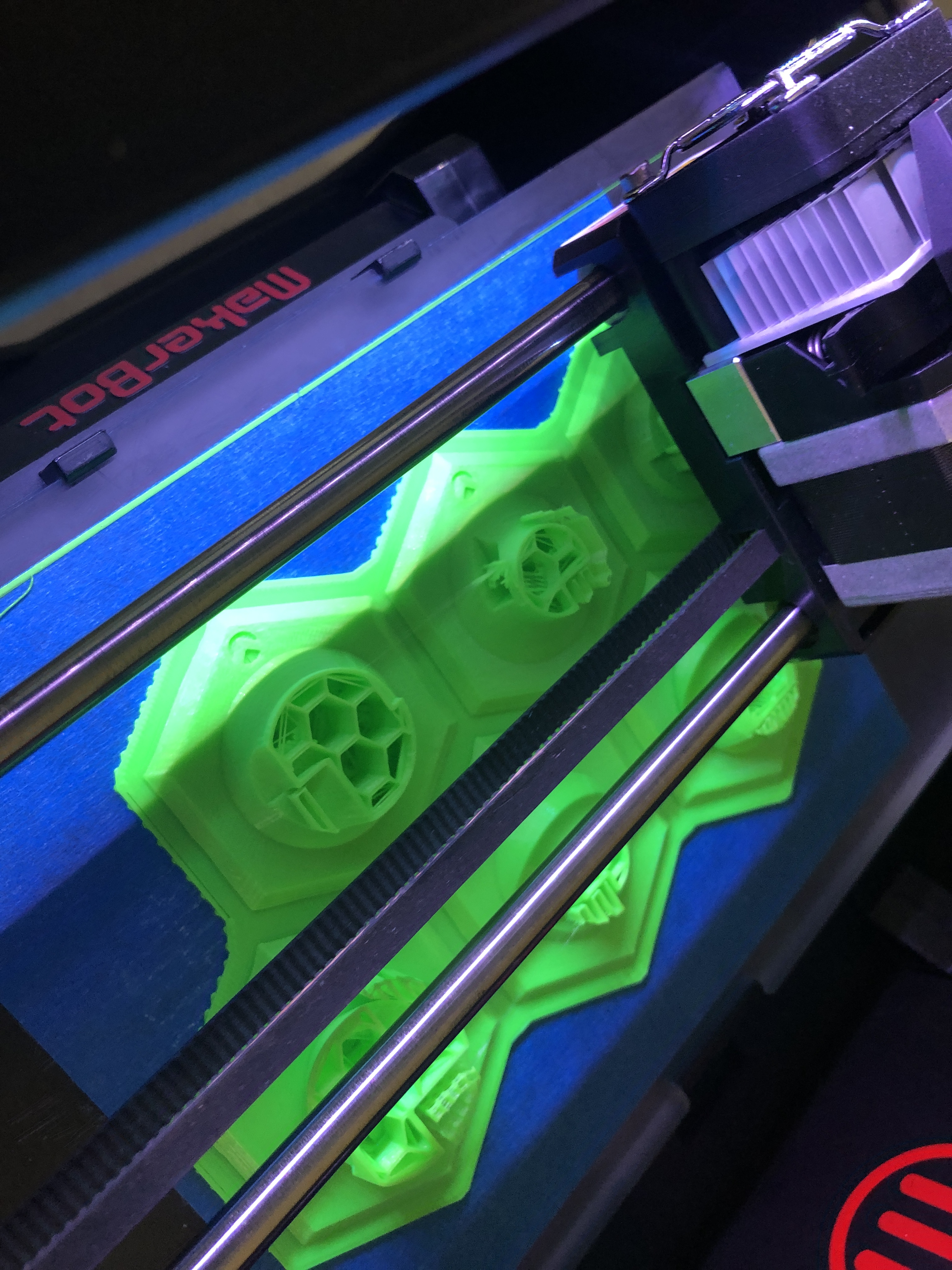

I have obtained this printing material, which I intend to use to produce the artifacts. I now need to decide on the shapes I want to create, as well as the communication system that could link them to a digital environment such as Unity or Processing and allow each object to be recognised.

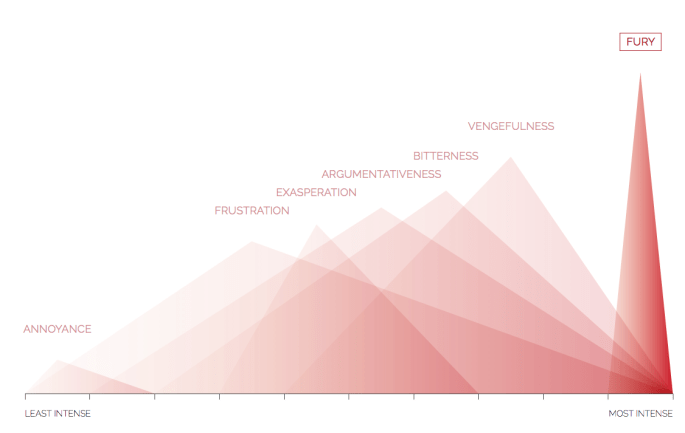

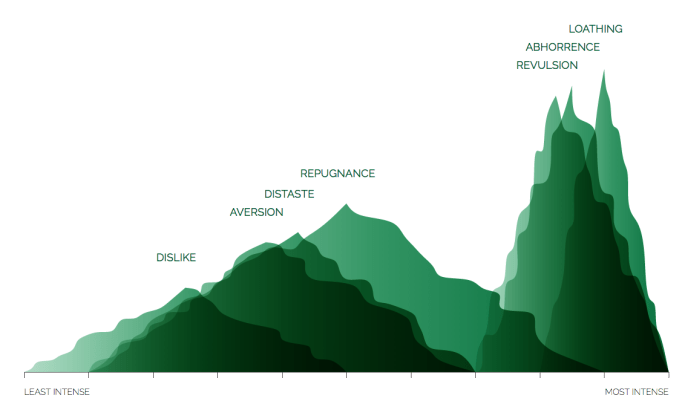

I found this article, entitled "The Shapes of Emotions", which discusses the shapes associated with different emotional variations and how they tend to fit within different subcategories. I feel that the use of colour and shape within this work isn’t absolutely vital, as the intention is to create a fictional universe where these artifacts were engineered by humans to allow the user to feel a specific emotion. Nevertheless, the chosen colour will need to evoke a response from the viewer, engaging and intriguing them enough to want to place the items on a reader.

Here is another set of images relating to how shape and colour can express and evoke emotions, representing them in symbolic ways. As with everything I researched previously, and with all the sources here, a lot of it seems to come down to personal opinion. Since I am trying to display a version of emotion which would almost be machine-made, it doesn’t need to be firmly grounded in scientific ideas and could instead come from my own personal representation.

This video explains how I intend to create the objects and then scan them in order to make their point cloud maps and display imagery around them in augmented reality. Before I can really progress with this, I first need to generate some form of object to work from. Here are several earlier iterations of shapes which I thought I might want to use as the artifacts. However, they seemed a little too rigid in some ways.

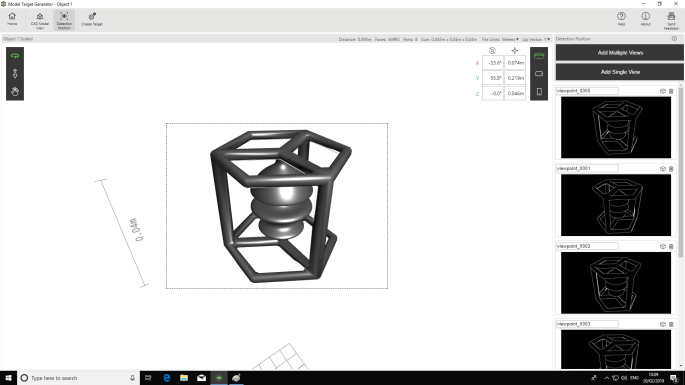

These images show my progress in Fusion 360 by Autodesk, producing the first test version of the model, which I intend to 3D print so that I can begin image target tests in Unity.

Here you can see renders with a material applied. I want to achieve a relatively polished finish with 3D printing. I have previously tested different grades of sandpaper to work out how to produce a relatively smooth surface on the prints.

These images, along with the short video, show the printing of the model. After it was completed, I stripped away the support material and did some very light sanding to create a reasonably accurate model of the object. From here I need to go into Unity and attempt to connect the model up with a cloud map and Vuforia to check that the system I intend to implement will work.

Here is a video showing how I tried to use Apple's ARKit functionality. However, I really struggled to get strong recognition with this system.

With it you create a point cloud map which can then be pushed into Unity, supposedly allowing the object to be tracked in augmented reality. However, what I found after attempting this many times is that the recognition is very narrow: it only recognises the object in the exact lighting conditions originally used, and from very few angles. I now aim to use another system instead, one which derives the recognition from an STL file. This is a better fit considering I am creating these objects as 3D prints from STL files. The symmetry of the objects could potentially pose an issue in this process, as the object's front cannot be easily defined.
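To show why symmetry makes the front hard to define, here is a small sketch in plain Python. It has nothing to do with how the tracking software actually works internally; the hexagonal outline, the 60-degree step and the function names are assumptions chosen only for illustration. It counts how many rotations leave an outline looking exactly the same, first for a symmetric shape and then for the same shape with one off-centre feature added.

```python
import math


def rotate(points, degrees):
    """Rotate a list of (x, y) points about the origin."""
    angle = math.radians(degrees)
    c, s = math.cos(angle), math.sin(angle)
    return [(x * c - y * s, x * s + y * c) for x, y in points]


def same_outline(a, b, tol=1e-6):
    """True if every point in `a` has a matching point in `b`."""
    return len(a) == len(b) and all(
        any(math.dist(p, q) < tol for q in b) for p in a
    )


def matching_rotations(points, step=60):
    """List the rotations (in degrees) that map the outline onto itself."""
    return [d for d in range(0, 360, step)
            if same_outline(rotate(points, d), points)]


# Hypothetical symmetric outline: the six corners of a regular hexagon.
hexagon = [(math.cos(math.radians(60 * k)), math.sin(math.radians(60 * k)))
           for k in range(6)]

# The same outline with one extra off-centre feature added.
marked = hexagon + [(0.5, 0.0)]

print(matching_rotations(hexagon))  # six indistinguishable orientations
print(matching_rotations(marked))   # only the unrotated orientation
```

The symmetric outline matches itself in six orientations, so nothing tells a viewer (or a tracker) which one is the front, while the single added feature leaves only one matching orientation.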

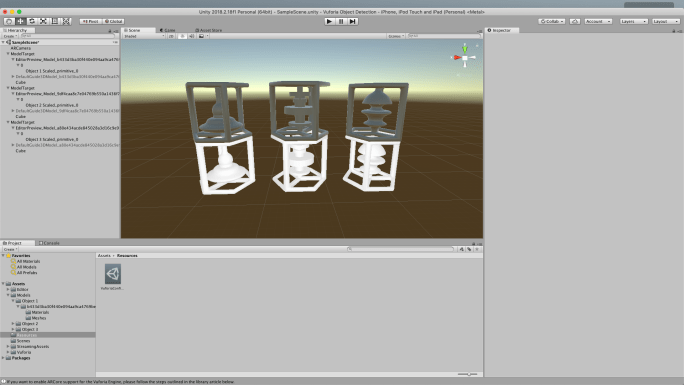

Here I have used Vuforia’s Object Target Generator to create a trackable object for augmented reality. I then brought it into Unity and created a prototype app which tracks objects derived from STL files. Here you can see a clip of the prototype in action.

After experimenting with the augmented reality and the recognition of object targets, I think the targets may simply be too similar to distinguish from one another. I am therefore going to create new models and print them so that they look more distinct while still clearly belonging to the same set.

Here you can see a whole new set of models which are more individual. I decided to remove the housing and the mirrored, symmetrical lines in order to make them more recognisable and distinguishable from each other. I will recreate the app and see if they can be picked up as individual targets more immediately.

I also added a fake form of branding to the base of the models to give them a sense of corporate ownership, which I want in order to build a sinister theme.

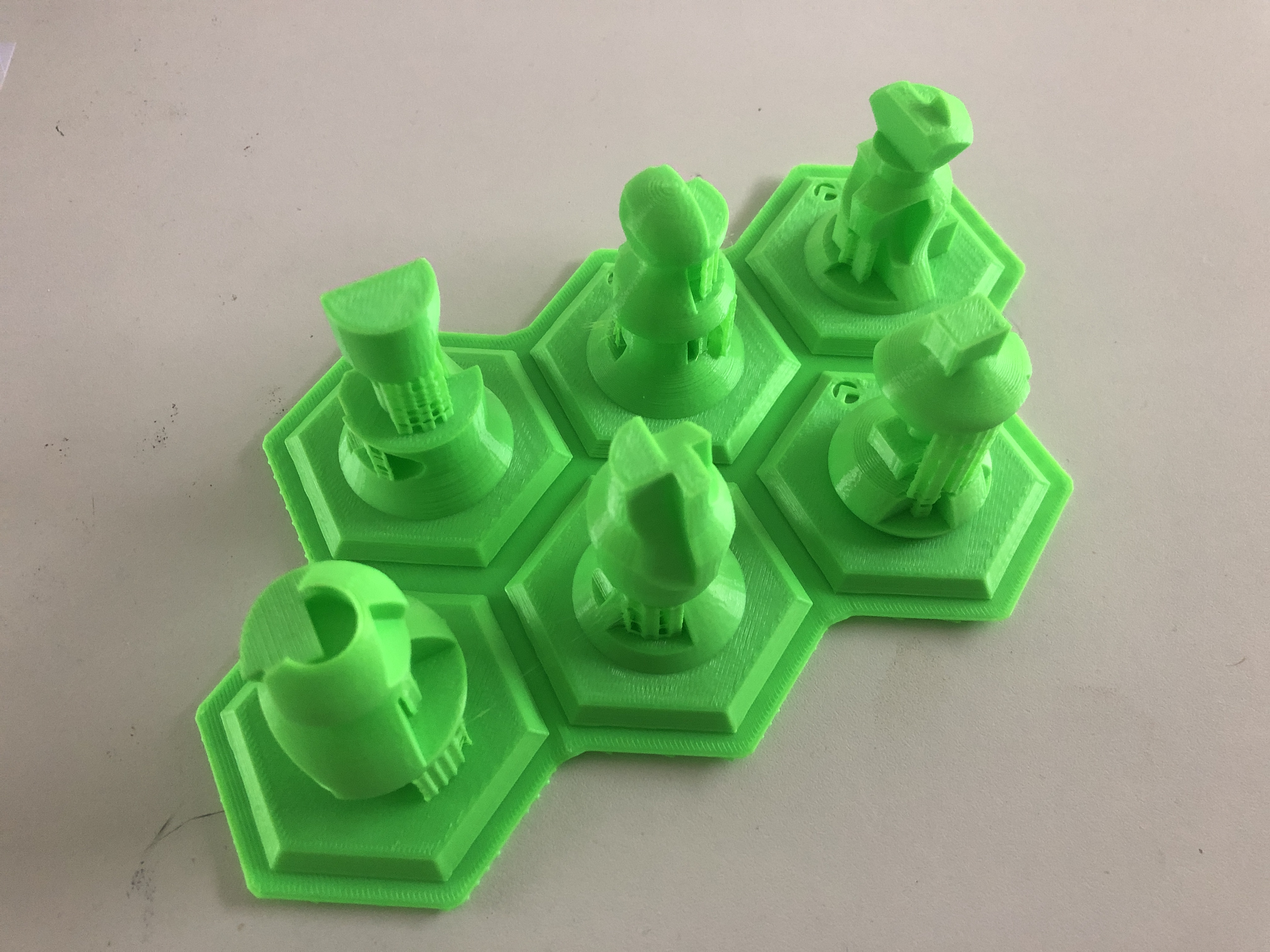

Here I have made a new set of object targets. These should hopefully allow the user to identify the front of each object, and also make the objects easier to define and scan, since I can tell the user to position the logo indent facing the camera. I have also created a bed layout for the 3D printer so that they can all print at once, as well as a laser-cuttable segmented board which the pieces can sit in for presentation purposes.

Once I have the image targeting working to a reasonable degree, I need to create videos to go alongside it. These will have transparency and appear in the augmented space to help the user further understand the concept I am trying to drive. They will explain the idea of Emotico as a future-based conceptual company selling emotions, in an attempt to question people’s reliance on emotion and our disbelief in the link between emotion and money so often referenced in popular culture.

Here I have created various UI elements, including app icons, overlays and logos for my fake company. This allows me to generate a sense of brand identity which can flow throughout the app. I also have a video which explains how to use the app, and which can be shown or hidden by tapping a button at the bottom of the screen.

Here you can see a honeycomb-based design for the board that will hold the objects. I intend to laser cut the shape out and engrave the logo into the surface under each piece, again for brand continuity.

Here you can see some of the process of 3D printing 6 of the final pieces. I created 3 unique designs for the final set and added a small logo to the front of each piece so that the user can orientate it correctly. I feel the vibrant colour now fits well with the app and will allow the targets to stand out in any environment, which helps the augmented reality process.

Here is a video of my Emotico app working, as well as a PDF which shows the branding I want to display alongside the app. I want it to continue the brand flow I am creating and also hint at the satirical nature of the app. What I mean by this is that some people may not understand that the app and products aren’t real and that they are mocking the idea of being in control of emotions. The brand labelling therefore hints at how preposterous this idea is.

Here you can see the stand I have made for the pieces to sit within. I hope that this, combined with the earlier branding, gives a sense that the whole setup is some form of early tech expo concept for emotional artifacts, and that you as a user could invest in the company or the product. This built-up satire makes you further question its deceit when you realise it was all a ruse.

These videos show 3 of the 6 overlays created for the augmented reality experience, which display how the artifacts represent emotional compositions. I quite enjoy that there is no explanation of how these objects can be consumed. It is the product's main and only flaw, and it is the tipping point for the realisation that the whole setup is fake. You cannot consume emotions in such a direct form, which is represented by the fact that no way is given to consume these vessels.

Here you can see the final intended layout for my app as I want it to be displayed at the Design Domain open studio exhibition. It looks somewhat expo-like with the branding, and the whole product has a somewhat hacked but clean feel. I have the phone on a wireless charger, which means I can leave it in Guided Access mode. I also have all the artifacts on a stand next to it, allowing them to be picked up by the user.

I have added a hand to the overlay, as well as instructions to place the device in your palm. I feel that not only does this help the recognition process, but it also allows the user to feel somewhat more connected to the object as they interact and engage with it through the app.

This is a demo of the app working in real space on my phone, untethered after exporting through Xcode, with a screen recording of the whole process in sync on the right-hand side. I feel this further shows the app functioning, and it helps me to better understand how the user may interact with the whole setup at the event.

Below is my final documentation video along with my exhibition explanation. I feel that overall this project worked out extremely well and has a polished finish in almost all areas. There are some places where I wish more could have been added, such as a deeper UI and overlay experimentation process. However, I feel that the UI does fit the app well and certainly adds to the whole theme. I think the ideology behind the work as a whole also works really well, and the synergy of satire and a real app really grounds the whole piece and makes the viewer relate to it in a much stronger way.