After casting the objects at the end of last week, I went in to break the clay away from the moulds. This worked with varying success. On the models of pure clay, it was really easy, and the clay even left the look of dirt on the plaster, which I think looked really appropriate given that I was trying to make them look like future fossils.

However, the main issue at this point was that the 3D printed moulds couldn’t be extracted from the plaster. This was because the plaster had expanded to some extent, and with both bodies being rather rigid, there was no room to flex them out of the moulds.

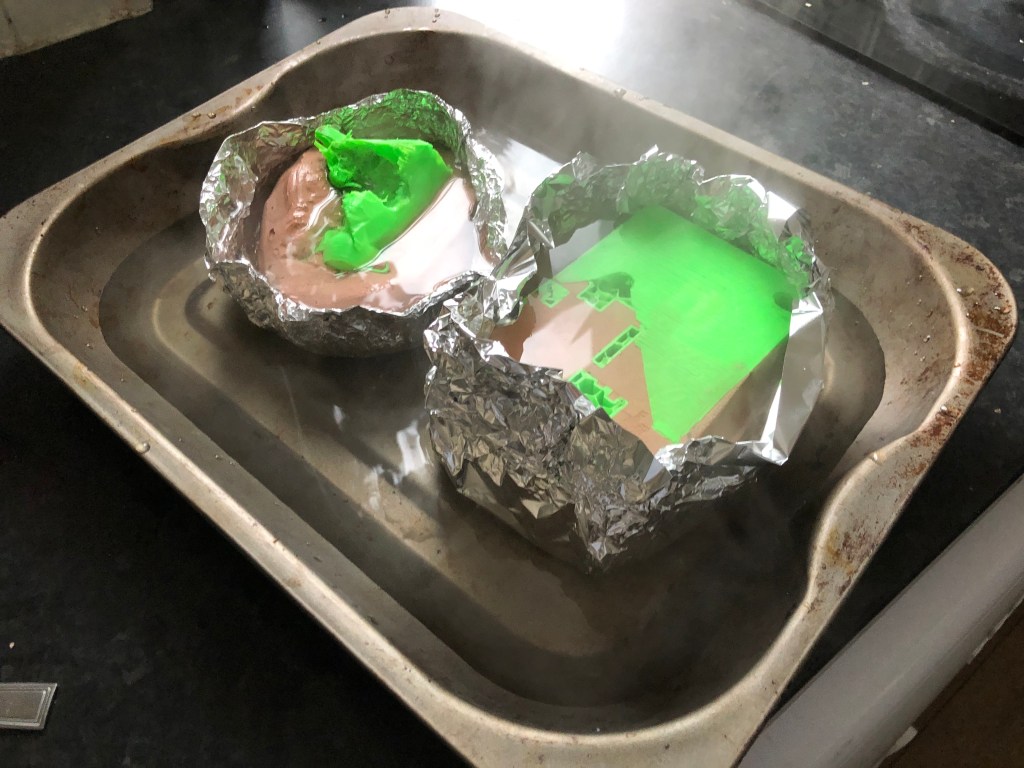

Thinking about the materials I had used, I decided to attempt to use heat to break the models apart. As the plastic used is non-toxic, I put them in the oven at around 250 degrees, which I hoped would make them flexible enough to break apart. I filled the pan with a large amount of water to submerge the models. This was because I know how brittle plaster can be when baked, so I wanted to keep it really hydrated in order to avoid this. Surprisingly this worked really well, and although it wasn’t the easiest process on some of the more intricate moulds, it made the plastic soft enough to pull out and skewer with forks and knives to get leverage. The hardest part was working with soaked, scalding hot objects while trying to pick at them, but I eventually prevailed.

Here you can see the results of this process. The casts came out really well, and although looking back there are many things I would have changed, these will still be good to take photographs with once I make them dirty again so they appear like fossils found in the wild in the future. Next time I would add more colouring to them, as well as more grime, dirt and rock pieces within the plaster itself to break up its clean look. I would also try to use either a wax 3D printer or a lower melting point plastic for 3D printing, to ease the process of removing the plastic from the casts themselves.

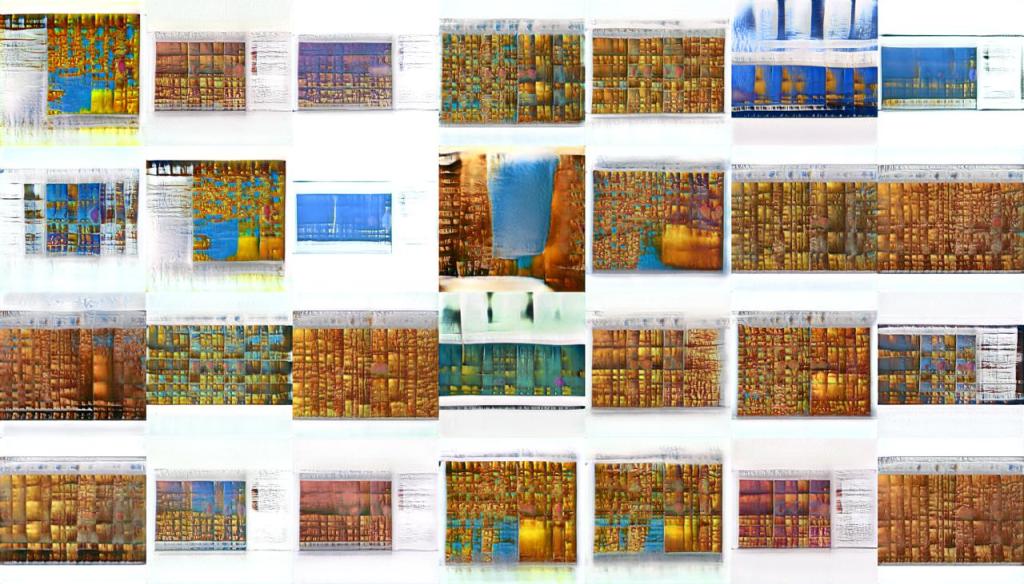

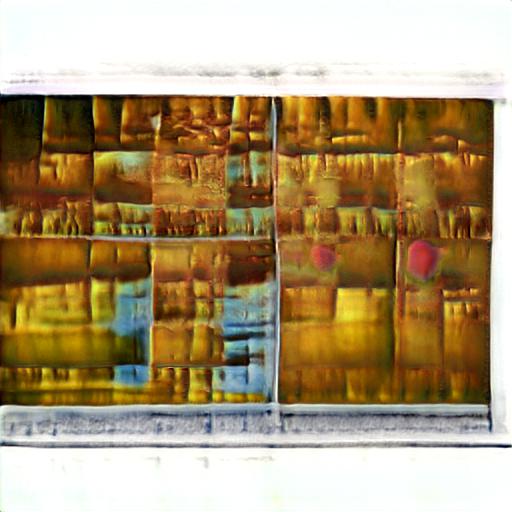

Here you can see my continued exploration of machine learning within the Runway environment. I am attempting to train models on different forms of materialistic value. The way we create objects supports our materialistic tendencies. Therefore I want to comment on this on varying levels, seeing which visuals express this in different ways. Here I have used the files from the GrandPerspective software, which I previously explored on my blog. I fed these into an existing trained model which had learned what nebulas looked like. From this I drew out very grid-like, yet strong images.

Here you can see this process as the machine learns how to understand the images from GrandPerspective as its new base for understanding.

These could be prints in themselves, but when you watch the model run itself, you can see how cell-like it has become. It heavily resembles images of plant cells under a microscope. Data embedded with flexibility almost mirrors life itself at its lowest levels. This in some ways is quite terrifying, but it provides rich visuals which comment on the nature of data itself.

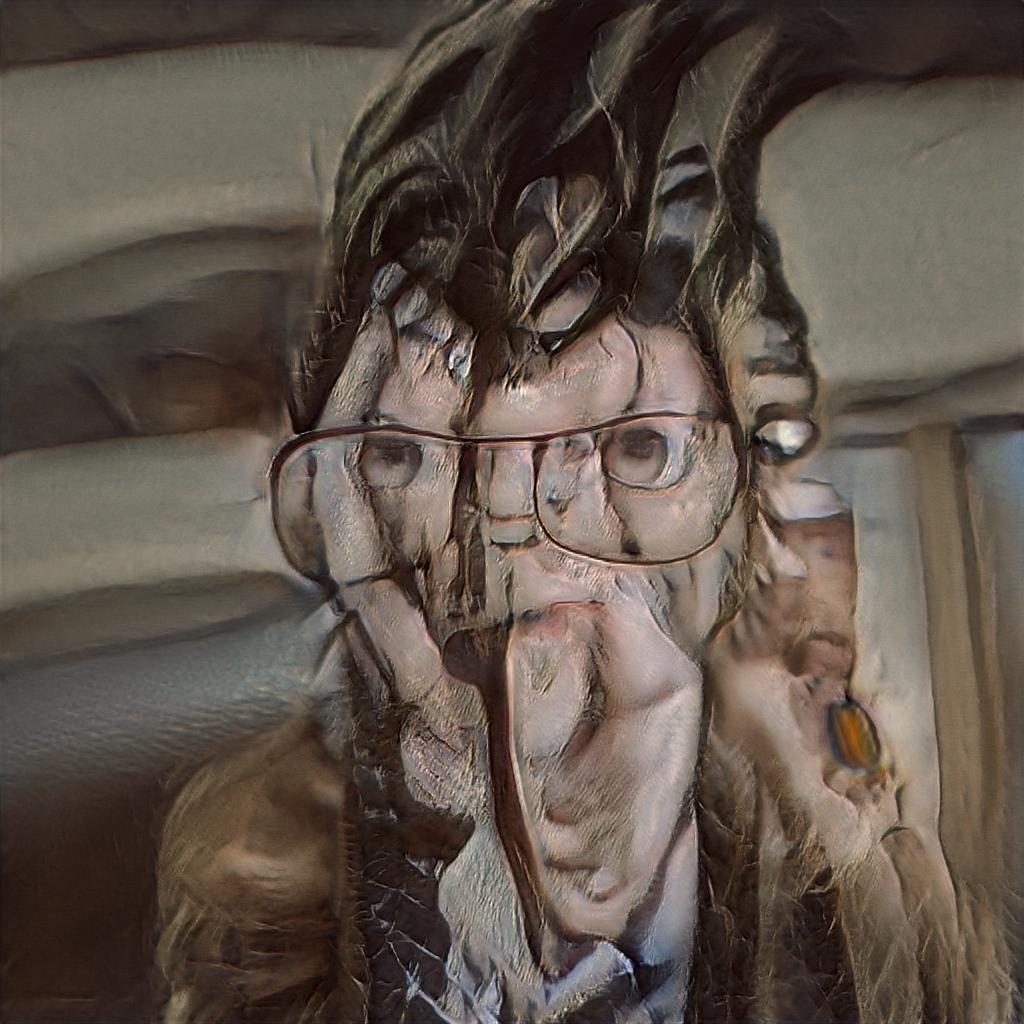

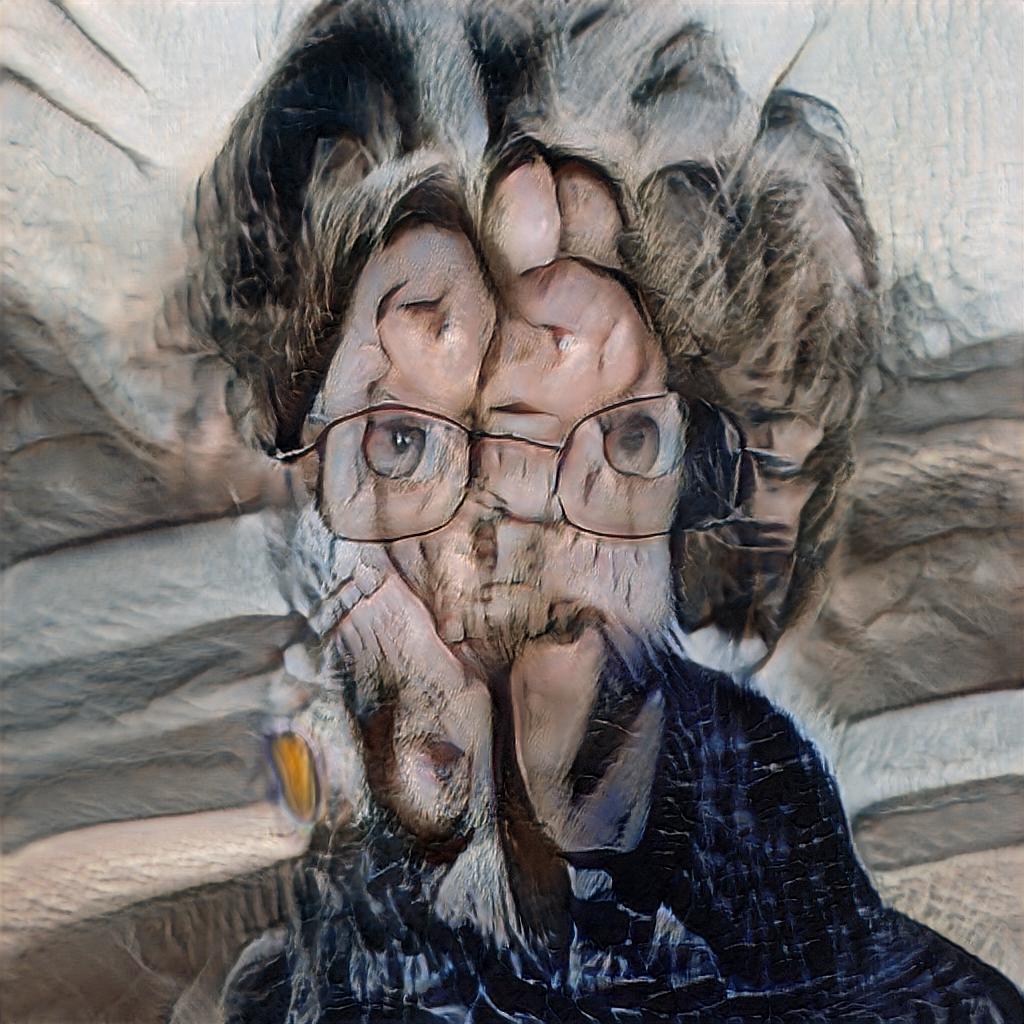

The next model I attempted to train within Runway started with a standard face-trained model. I then fed in photos of my own face from my phone, which had been detected by Apple’s built-in facial recognition. I deliberately didn’t crop these down or edit them in any way, in order to confuse the existing training. I wanted to see what would be most recognisable to the computer about me. By doing this I can explore its prejudice. Any machine trained specifically by humans will maintain bias. I found that my hairstyle with a mid parting, as well as my eyes and glasses, seemed to be maintained between all of the images.

This final image is the most evocative of how the computer sees me. We view materialism and reproduction in such a passive way. But here we have a computer drawing images of me at my command. Is this scary, exciting or just uncanny?

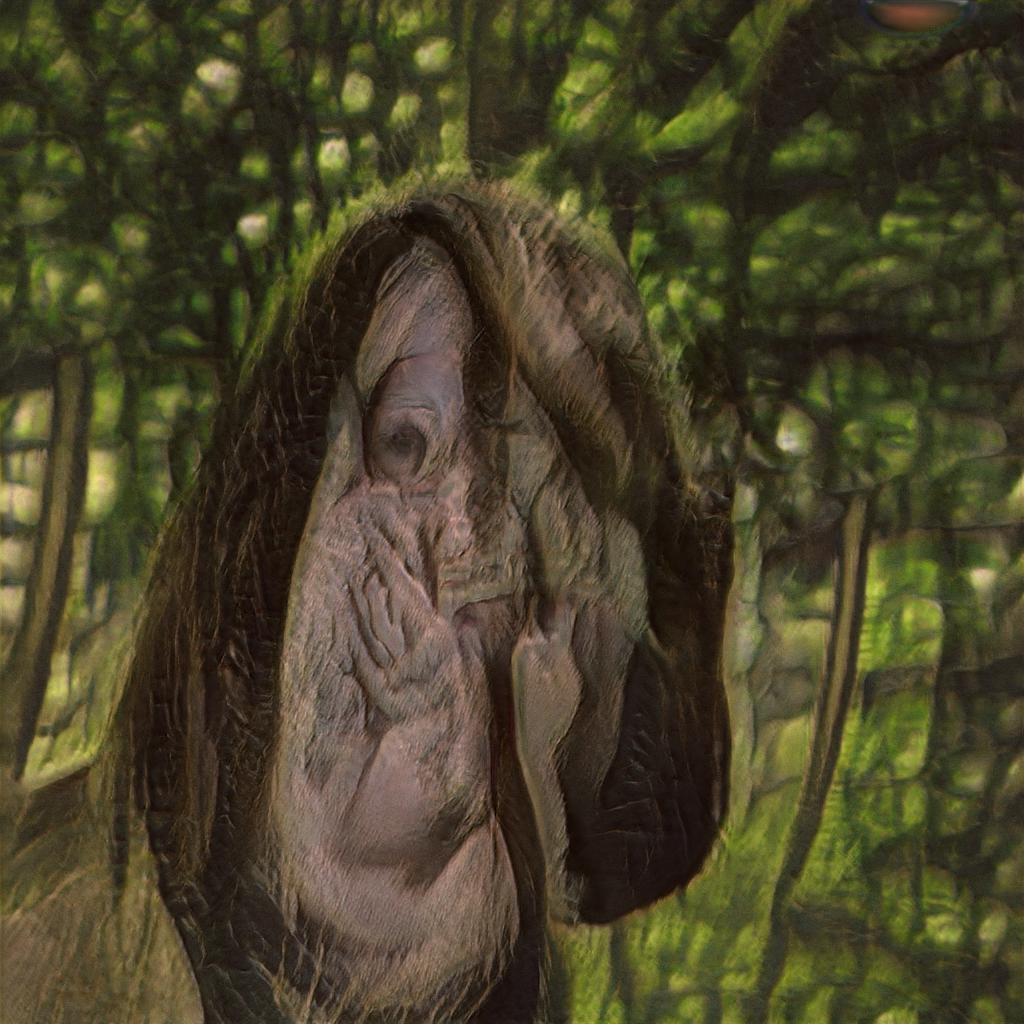

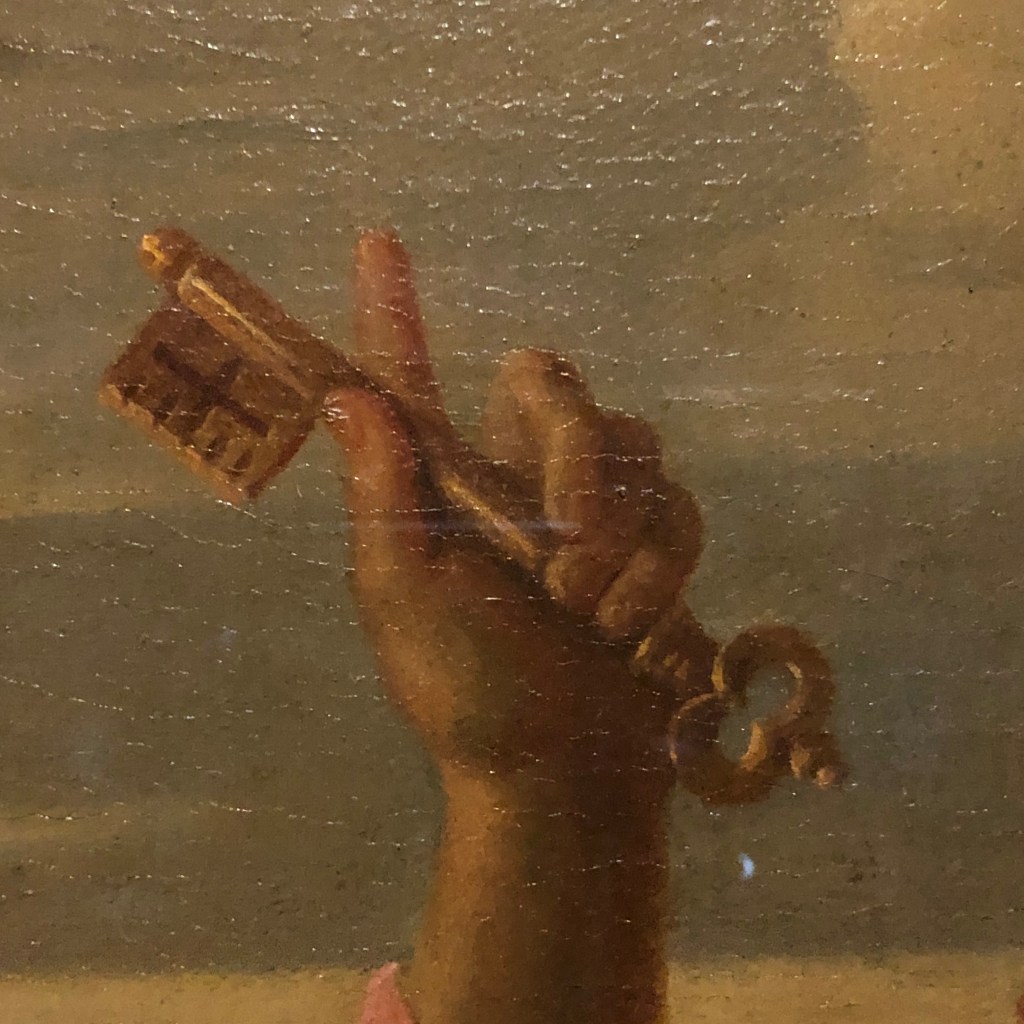

This third Runway experiment is based upon objects seen in paintings in portrait galleries across Edinburgh. I find this interesting because of the detail in which these objects are depicted and immortalised. They are so vividly idealised in their nature, you could almost say worshipped. I wanted to comment on this and see what a machine would see in them.

The form displayed in the video is indistinguishable. A machine doesn’t understand the value in these objects, as it hasn’t been exposed to them for a lifetime. However, at moments, as shown below, it seems to comprehend the ideas of shape and form. I really enjoy this visual as it almost feels like a mind learning how to comprehend the solid form of objects.